Multithreading example in C#

There is a certain pattern I tend to lean on when performing multithreading operations. C# exposes some very useful functionality for dealing with multithreading problems – in this example I’m going to take a look at the ThreadPool class.

For this example I’m going to calculate Fibonacci numbers – this is an easy example since the numbers can be calculated in parallel and the order in which we calculate the numbers are unimportant.

ThreadPool

The ThreadPool class allows us to delegate the use of multiple threads to the .NET framework. This means that the .NET framework will decide how many threads should be used to perform the specified operation and how threads should be re-used. If we didn’t have this functionality we would have to manually decide when to create and re-use threads. As we will see in the example the .NET framework does this in an optimal way.

To request that a work item be handled by a thread in the thread pool we call the QueueUserWorkItem method, which accepts a delegate. Since we want each delegate/method to execute in a specific context I usually create a separate class and then create an instance of the class for each multithreaded operation. In this example I created a class called ‘Worker’ with a delegate called ‘Calculate’.

public class Worker

{

private readonly int nthNumber;

public Worker(int nthNumber)

{

this.nthNumber = nthNumber;

}

public void Calculate(object o)

{

Console.WriteLine("Fibonacci {0} is {1}", nthNumber, Fibonacci(nthNumber));

}

internal static int Fibonacci(int n)

{

if (n == 0)

{

return 0;

}

else if (n == 1)

{

return 1;

}

else

{

return Fibonacci(n - 1) + Fibonacci(n - 2);

}

}

}That’s the first step. Now we need to be able to tell when all the threads have completed. The main thread in our application will need to block and wait for all operations to complete.

Thread synchronization

One way of implementing this functionality would be to save all the results to a list and have the main thread check the results every second to see if all the results have been calculated. This is obviously not the ideal situation since we will be wasting a massive amount of resources by running this check and we would also need to synchronize access to the results list.

Luckily for us C# exposes a number of thread synchronization techniques. The one I’m using in this example is the EventWaitHandle class. The analogy I use for explaining this structure is that of a turnstile – you have to wait at the turnstile until someone else allows you to pass through. In this case the main application thread will wait until all the threads have completed – the last thread to complete simply needs to signal the turnstile or EventWaitHandle.

Now we have the final problem – a thread needs to know that it is the last thread to complete. To do this I simply declare a static counter inside my worker class – I increment the variable every time a worker is created and decrement the variable every time the worker completes. The only trick is that we need to increment and decrement the counter as an atomic operation – we can do this by using the Interlocked.Increment and Interlocked.Decrement methods.

internal static readonly EventWaitHandle AllWorkersCompleted = new EventWaitHandle(false, EventResetMode.AutoReset);

private static int numberOfWorkers = 0;

private readonly int nthNumber;

public Worker(int nthNumber)

{

Interlocked.Increment(ref numberOfWorkers);

this.nthNumber = nthNumber;

}

public void Calculate(object o)

{

Console.WriteLine("Fibonacci {0} is {1}", nthNumber, Fibonacci(nthNumber));

if (Interlocked.Decrement(ref numberOfWorkers) == 0)

{

AllWorkersCompleted.Set();

}

}Now we simply need create a worker instance for each number we want to calculate and use the ThreadPool class to schedule each calculation.

Performance

To test the performance of my application I calculated the first 44 Fibonacci numbers – first using a single thread and then in parallel. On my laptop (Intel Core Duo 2.2 GHz with 4GB RAM) the single-threaded calculations complete in about 80 seconds and the multi-threaded calculations in about 50 seconds.

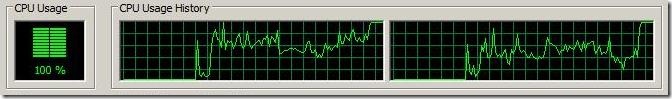

The really cool part (in my opinion) is taking a look at task manager while executing the multi-threaded operations.

No matter how many CPU’s you have the ThreadPool will manage to completely max out CPU usage until the operation completes. Pretty cool.

Happy coding.